Often when I’m working on a project, I’ll work through a series of ideas before settling on something that fits the bill. I try to write down these ideas and keep them around on the off chance that I could use them for a future project. Not long ago, I was working on a different computer project, assessing the cooling requirements, and I thought to myself, “It sure would be cool to build a fully functional, small scale wind tunnel as a case for a computer.”

Unfortunately, I didn’t have time to investigate this wind tunnel-computer idea, and it sat around in some distant corner of my brain until I decided to build a new computer: a medical research computer that would donate its time to cancer research.

Project Conception

In 2011 I became actively interested in grid computing, and specifically in World Community Grid.

The idea that I could build a computer, or use existing computer resources and donate their power so scientists and researchers could process medical and humanitarian research was extremely interesting.

By donating computer processing time, you actively contribute towards a great cause. World Community Grid has numerous projects available; finding cures and treatments for cancer, AIDS, malaria, muscular dystrophy, etc.

As I became more interested I found myself connecting all the computers I had in my home. Eventually I decided I wanted to do even more; I wanted to build a computer that could donate all of its time to processing medical and research data. That project culminated in the creation of the Lego Folding Farm. This system housed three separate computer systems, all running in one giant Lego case. The system went online in July of 2011 and has been running 24/7 ever since.

In the past year, World Community Grid moved closer to enabling a GPU (Graphics Processing Unit) based project, the Help Conquer Cancer project. GPU computing allows a project to utilize the full processing power of the GPU. In most cases, a project written to run on a GPU is significantly faster than its CPU counterpart. In the Help Conquer Cancer project, a single CPU workunit took around an hour for my other computer to run, where the GPU enabled version completed in less than 10 minutes.

While the GPU version of the Help Conquer Cancer project was being developed, I felt personally challenged to donate more towards cancer research. I’ve had people in my family affected by various forms of cancer, and I’ve always felt like I wanted to do more to help. But beyond donating money to cancer causes or doing cancer walks (which are also a great way to help show support) it’s hard for regular folks like myself to feel like we’re really contributing. It seemed like perfect timing that World Community Grid was bringing onboard a project that would allow for significant increases in the speed at which cancer research could be completed. I felt very compelled to actively pursue adding more resources towards this cause.

My Lego Folding Farm is a farm of CPUs and was built to process numerous different medical and humanitarian projects. At the time it was built there was not a GPU enabled project that ran on World Community Grid, so I focused all of my efforts on CPU processing power. The GPUs that the Lego folding farm uses are nothing special. While they can process a GPU enabled project they don’t really have a lot of processing power for that task. With that in mind I decided to build a GPU processing system that would solely dedicate its time towards cancer research.

I investigated the expenses to build and run this system and realized that the component costs would be high, as were the added electricity costs required to keep the system running 24/7. I had already added a significant expense 2 years ago with the electricity required to power the Lego folding farm. I wasn’t sure I could afford the cost of the system itself, as well as another electric bill increase.

Unable to solve the funding problem on my own, I turned to my resident creative genius department @thetinnishflash and explained the issue. After some discussion, we decided to try and build this system with donations from friends, family and others that wanted to be involved in the fight against cancer.

We turned to the fundraising site Indiegogo and set up a project campaign to raise funds to defray the costs of the components as well as the electricity. After a couple of weeks of work we were able to raise a significant sum of money, almost enough to cover the costs of the components themselves! Outstanding! I’m still so very appreciative of all those who donated and helped make this project happen; without their donations it would have been a hardship to complete.

Getting the support of others, with friends and family coming together to help make something happen, was my favorite part of this project. It’s a great feeling being involved and getting to build something that others have contributed towards.

Computer Planning

Once the fundraising was completed I began gathering up components for the build. I dubbed this project “Cancer Supercomputer” and I had fully intended on outfitting it with the components needed to be a GPU processing powerhouse. After hours of research I settled on a list of components.

CPU- Ivy Bridge 3770K

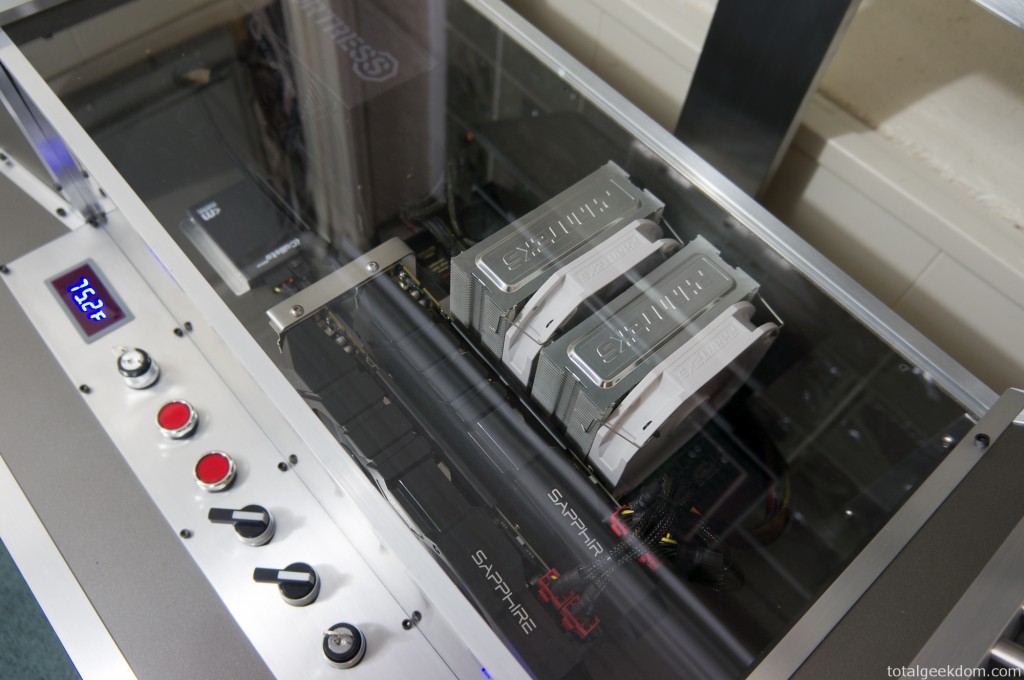

GPU- Radeon 7970 (Sapphire Dual-X cards)

RAM- Corsair Vengeance 8GB 2133MHz

SSD- Mushkin Callisto 40GB

PSU- Rosewill Fortress 650W Platinum

Motherboard- Gigabyte Sniper M3

CPU Cooler- Phanteks 140mm

My plan was to configure the computer and then overclock the hell out of it. With this in mind, I started wondering what route I wanted to go as far as cooling. I debated going with water cooling, but decided instead to see how far I could push air cooling.

Case Design

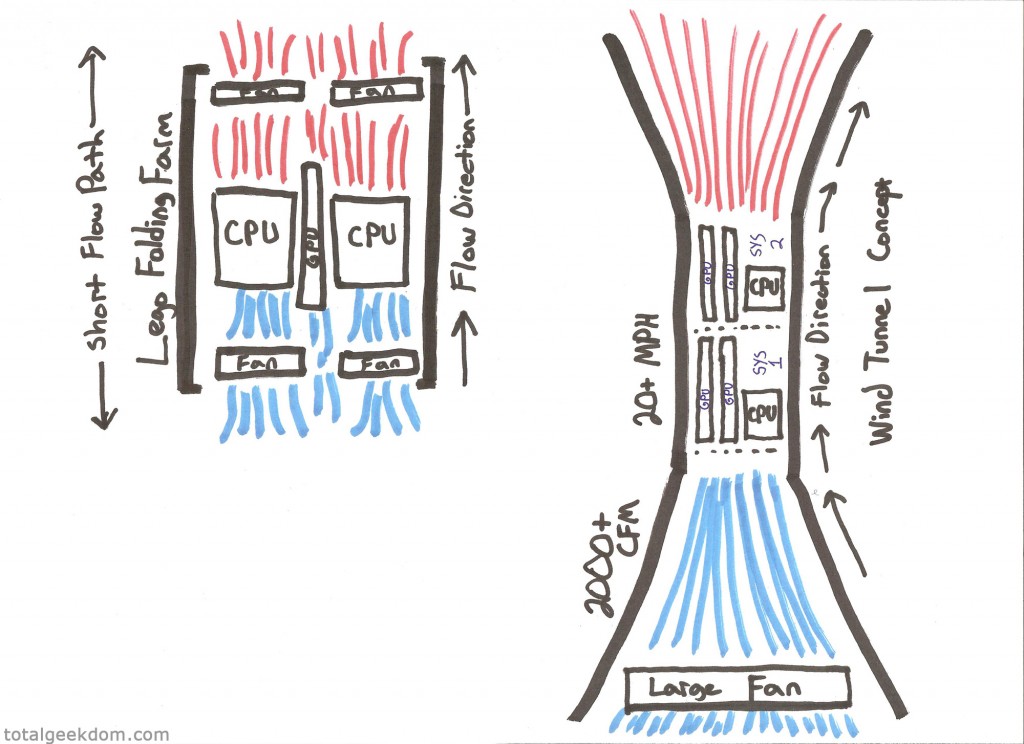

During the design phase of the Lego Folding Farm, I decided that the best method of cooling many hot components in a tight space was a very short path for airflow. Conventional computer cases generally intake air from the front which then travels through the case and rises upwards. Depending on the configuration there are numerous paths for the hot air to exit. There is almost always a rear exhaust fan, and in some cases a top mounted exhaust fan, as well. Additionally, some air will exit through the power supply fan. The problem I see with this method of air cooling is that the path that the airflow must take is long and winding.

To work around this, I built the Lego Folding Farm with a very short path from intake to exhaust. The intake fans sit directly in front of the components and blow air across the CPU cooler, the GPU cooler, the motherboards on both top and bottom, the heatsinks, the power regulation circuitry, and the power supply. These are directly cooled by unobstructed airflow. The exhaust side is a mirror of the intake path, where air is directly exhausted out of the case. This method allowed for a much shorter path to get fresh, cool air in and blowing directly on the components.

However, with the Cancer Supercomputer I knew the graphics cards were very long and had very big coolers on them, so I needed to change my approach. I also knew from testing that under full load the GPUs could output a lot of heat, especially with multiple GPUs in a tight space. I needed a solution that allowed for more space, while also allowing me to move a lot of air.

Additionally, I wanted to pay close attention to air pressure. A conventional computer case has a lot of large “dead-space” areas. These areas don’t direct or force air to flow towards the components that are being cooled. I wanted to avoid dead space. I wanted the case to closely mirror the shape or profile of the finished motherboard with components. This way all the airflow would be forced to travel across the components’ surfaces, as opposed to flowing past large empty areas of case.

(My drawing skills are clearly unmatched)

Wind Tunnel Concept

Knowing I needed to maximize cooling, I explored a concept I’d tinkered with previously: a wind tunnel computer case. I had done research on wind tunnels back when I first investigated using a wind tunnel to cool components. There was a lot of information, much of it only helpful to those with a physics background. I’m not a physicist, but given enough time I can usually understand theories well enough to apply them in the real world and test them out, and that was the case here.

I used an anemometer (velocity/airspeed meter) for testing, as well as different fans and basic shapes made out of cardboard. I was able to conclude that I could increase air velocity through a scale wind tunnel.

There are two types of wind tunnels; the one I’ve built is a subsonic wind tunnel. This type of design involves a contraction section which is used to increase velocity (airspeed) through the test section. This increase in airspeed was what I was looking for: a way to increase airspeed over the computer components.
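
As a rough illustration of why a contraction speeds the air up, here is a minimal sketch of the idealized continuity relation for low-speed (incompressible) flow: the same volume of air has to pass through every cross-section, so speed scales with the area ratio. The areas below are hypothetical placeholders, not measurements from this build, and real tunnels lose some speed to friction and turbulence.

```python
def contraction_speed(inlet_speed, inlet_area, throat_area):
    """Idealized speed in the narrow section from continuity: A1 * v1 = A2 * v2.

    Assumes incompressible, loss-free flow, which is only an approximation.
    """
    if inlet_area <= 0 or throat_area <= 0:
        raise ValueError("areas must be positive")
    return inlet_speed * inlet_area / throat_area


# Hypothetical cross-sections in square feet (NOT measured from the tunnel).
inlet_area_ft2 = 4.0
throat_area_ft2 = 2.0

# Speed comes out in whatever unit the inlet speed was given in (MPH here).
print(contraction_speed(5.0, inlet_area_ft2, throat_area_ft2))
```

Halving the cross-section doubles the ideal speed; a real tunnel will land somewhat below that figure.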

There are numerous factors to consider when it comes to wind tunnel design and testing, things like Reynolds numbers, turbulence, boundary layer air, and heat from surface friction. I did extensive testing with various designs, materials and configurations until I settled on a final design.

In a perfect world I would have been able to build a larger wind tunnel. There were many constraints on this project to make it workable. The biggest of these was space. I’ve slowly converted my basement to a lab with toys, computers, Lego, robots and all kinds of various geekery. Therefore, I only had one area with enough space to construct a wind tunnel. The space itself was about 72″ long by about 26″ wide, and whatever I was going to build had to fit within those confines.

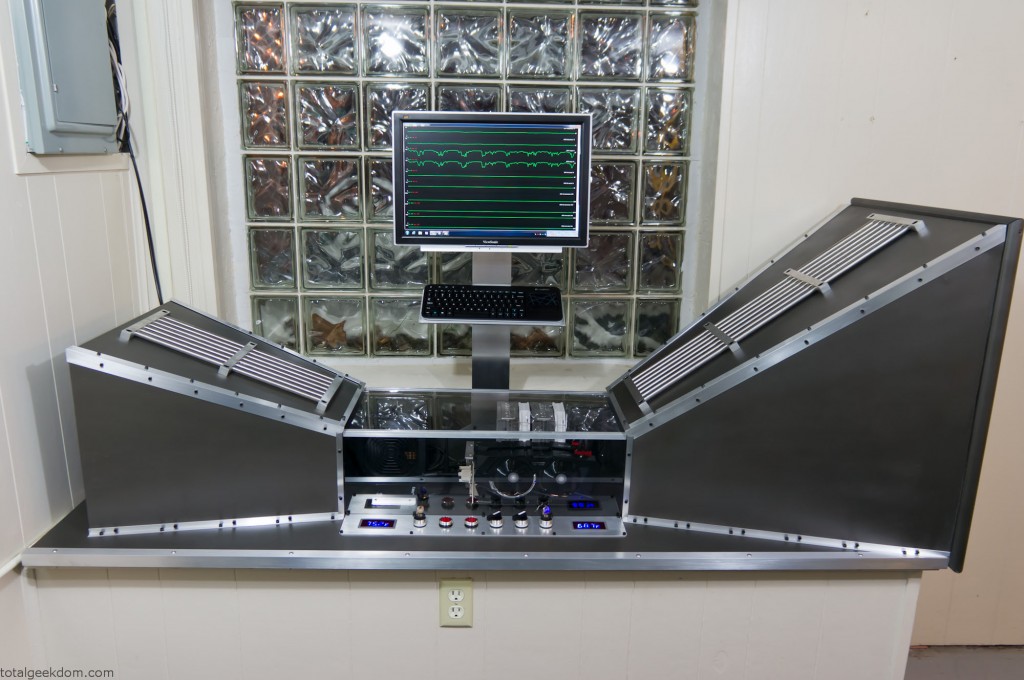

Next, I needed to fit computer components in the test section of the wind tunnel. I needed the test/contraction section to be big enough to accommodate the motherboard/CPU cooler/GPUs and also fit the power supply and a SSD. After tinkering with several designs I settled on a shape and size that allowed room for further growth. The contraction section of this wind tunnel has enough space for two full mATX motherboards plus the PSU. This allows for the possibility of adding another system in the future and cooling two systems inside one wind tunnel.

Next I had to contend with fan noise, as I would with any other air-cooled PC. I debated using various sized PC fans and configuring them in a grid pattern at the inlet. Using fans around 220mm would’ve allowed for multiple fans at the inlet without too much noise. But when testing those fans I found that the airflow and CFM (cubic feet per minute) produced weren’t adequate. At that point I changed direction and decided that I would use a single large fan with multiple speeds. This would produce a much higher airspeed as well as much more CFM. I tested a couple of different fans and settled on a box fan. It only consumed 96 watts on its lowest setting and allowed for peak flow in the 2200 CFM range with air speeds of 14-15 MPH.

Wind Tunnel Construction

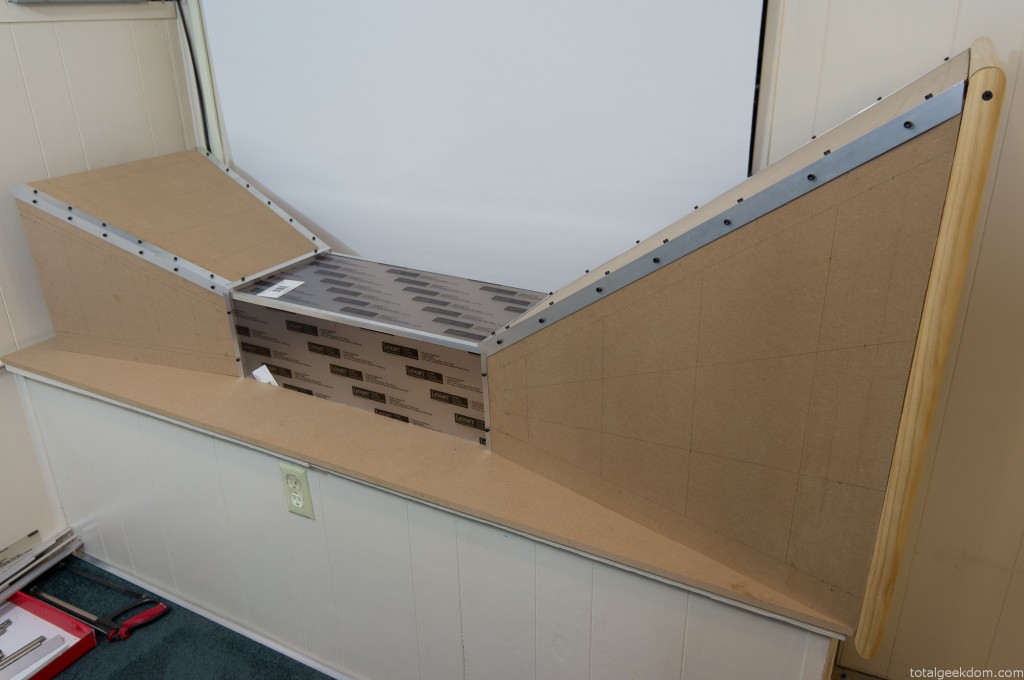

I looked at a couple of different options for building materials. I debated using sheet metal, and then looked at plastics. At one point I was going to make everything out of carbon fiber as I had some spare fabric, but the fact that carbon fiber is conductive dissuaded me, as the computer components are relatively sensitive to static discharge. In the end I chose wood, specifically 1/2” MDF composite board. It offered a nice surface to finish and paint and was strong enough to build the entire tunnel structure.

I had a fair amount of MDF board in my garage and that made for an excellent basis to start. I cut out the basic shapes for the inlet and outlet as well as the back panel of the contraction section. I also cut an entire piece that would serve as the base.

After gathering the pieces I screwed everything together to form the basic shapes needed to mock everything up.

Once I had the basic shape configured I went about tweaking and adjusting the layout of the pieces. Next, I moved on to testing the inlet section with the fan to verify the setup.

Then I moved over to working on all the finishing and edge pieces. I bought some cheap pieces of angle aluminum from the hardware store and cut and fit them at all the edges. I wanted a transparent section for the contraction/test section where the computer was going to reside. I used a couple of pieces of Lexan, cut to size to form a transparent window and top section.

Next, I moved onto prepping and painting all the pieces. I prepped the MDF for paint by using a sealer. From there I sanded the surface smooth before painting. I went for a darker metal color called anodized bronze. After some initial mockup with the painted pieces I later sanded them to achieve a smoother finish.

Switch/Gauge Panel

Once all the pieces were in place and the basic mockup was done I moved onto the wiring and gauge/switch assembly. I wanted a series of switches I could use to control the various functions of the wind tunnel and computer. I also wanted switches in place for future expansion so I could add a second computer.

I found some nice quality switches online, for cheap. There are 6 total switches. The first two switches with big red buttons are momentary switches that turn on the computers. (Only one used now, the second for a future computer)

The lever switches control both the fan and the power to the LED temperature gauges.

The key switches are wired to the computer power switches and also to the fan switches. The key switches prevent anyone from turning anything off, but most importantly of all, I always wanted two key switches so that I could pretend I had a nuclear submarine that required two keys in order to launch. I always use my Sean Connery accent when using the key switches in order to maintain authenticity.

I also added some nice LED temperature gauges that I found online for cheap. They have remote probes and only require a 12v power source. I placed them on the ends of the panel so that I could easily monitor the inlet and exhaust temperatures of the wind tunnel. For power I used an old Dell 12v speaker power adapter and rewired it to the switch to provide power to the temp gauges.

The panel is a piece of aluminum that I cut holes in, to which I mounted all the gauges and switches. I’m pretty happy with how it all turned out. All the power, plug-ins and connections are beneath the switch/gauge panel.

Trimwork/Aluminum Edging

All the angled aluminum is secured by 10-24 screws which I drilled and tapped into the MDF. It’s surprisingly a very strong combo. I would recommend using a step down on the drill size when you tap into MDF as it’s not as robust as actual wood. After what felt like days upon days of drilling and tapping I finally finished installing all the trim and screws.

The aluminum edging itself was polished with varying grits of sandpaper to achieve a brushed look. The edging at the bottom of the inlet and exhaust sections firmly holds both sections in place.

The aluminum braces with steel tubes that are on the top of the intake and exhaust sections are decorative. I picked up some extra scrap stuff from the metal yard for another $5. The stainless tubes contrast nicely with the brushed aluminum.

Monitor/Keyboard Stand

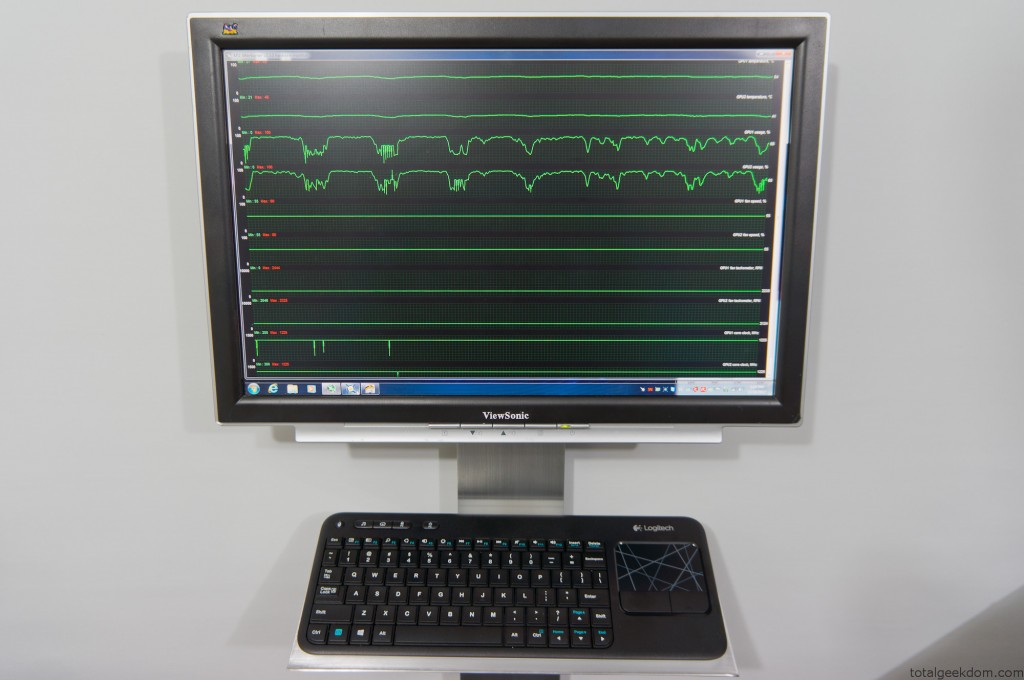

I debated buying a monitor stand, but since I was attempting to do this as cheaply as possible, I thought it might be a better idea to make my own. At the metal salvage yard I had purchased a junk, cutoff piece of aluminum for $5. Using that piece, I machined a stand to mount the monitor to, and then attached a tray I made for the keyboard.

The monitor itself was purchased on eBay and only cost $15 because it was broken. After adding a couple of new capacitors it worked just fine. It’s a 20″ ViewSonic.

Wind Tunnel Air Intake

I designed the air intake section to try and minimize fan noise. It seemed moving the fan deeper inside the intake section would cancel out a little bit of the blade noise. Additionally, I added a couple of layers of open grating which helped to minimize fan noise. With a decibel meter I measured around 65-67 decibels, which is significantly quieter than the 75-77 decibels that the Lego folding farm measures.

The fan sits about 4 inches inboard of the intake. I used some molding to form a radius at the inlet to smooth the airflow coming in around the inlet opening. I used some open “egg-crate” style plastic drop-ceiling panels, cut them into sections and placed them in front of the fan. For the intake opening I used more of the ½” angle aluminum, cut a bunch of pieces and placed them with the corner facing out. I spaced them very close together allowing a small 1/8” or so space between them.

This minimizes airflow restriction, because of the very large surface area of the intake inlet.
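
To put the decibel readings above in perspective, here is a small sketch using the standard decibel definition, where every 10 dB step is a tenfold change in sound power. It uses the midpoints of the two quoted ranges and assumes both readings were taken the same way (same distance and meter settings), which isn’t stated here.

```python
def sound_power_ratio(louder_db, quieter_db):
    """Ratio of sound power between two decibel levels: 10 ** (difference / 10)."""
    return 10 ** ((louder_db - quieter_db) / 10)


# Midpoints of the quoted ranges: 75-77 dB (Lego folding farm), 65-67 dB (wind tunnel).
folding_farm_db = 76
wind_tunnel_db = 66

ratio = sound_power_ratio(folding_farm_db, wind_tunnel_db)
print(f"The folding farm emits about {ratio:.0f}x the sound power of the wind tunnel")
```

A 10 dB drop is often described as sounding roughly half as loud, though perceived loudness varies from person to person.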

Airflow Testing

Using my anemometer (wind speed tester) I’ve been able to record airspeeds and velocities. The following numbers were measured with the fan set to its lowest setting.

Inlet Air Speed- 0.6 MPH or .88 ft/s (Measured at inlet grill)

Exhaust Air Speed- 1.4 MPH or 2.05 ft/s (Measured at exhaust opening)

Fan Output Air Speed- 5.0 MPH or 7.33 ft/s (Measured free standing, no tunnel)

Contraction Section (Without Computer) Air Speed– 12 MPH or 17.6 ft/s (Unobstructed flow- Empty Test Section)

Contraction Section (With Computer) Air Speed- 9 MPH or 13.2 ft/s (Obstructed Airflow)

CFM (Cubic Feet per Minute)- 1200 CFM

This data shows that the basic design of the wind tunnel (inlet, contraction and exhaust section) does an excellent job at increasing air velocity.

The shape of the tunnel raises the airspeed to around 240% of the fan’s free-standing output (12 MPH through the empty contraction section versus 5 MPH from the fan alone). This is a very low speed example of the potential of a subsonic wind tunnel. I also tested the fan at its highest setting and recorded air speeds of around 26-30 MPH through the contraction/computer section of the tunnel. This is just with a cheap box fan. Adding a higher speed fan would possibly allow for greater velocities, though the cooling benefits on the computer components would need to be tested at such high speeds.
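
For anyone who wants to check the arithmetic, here is a short sketch that converts the MPH readings listed above to ft/s and compares each one with the free-standing fan output. The dictionary labels are just shorthand for the measurement points, not names used anywhere else.

```python
MPH_TO_FT_PER_S = 5280 / 3600  # feet per mile divided by seconds per hour

# Low-fan-setting readings from the list above, in MPH.
readings_mph = {
    "inlet grill": 0.6,
    "exhaust opening": 1.4,
    "fan, free standing": 5.0,
    "contraction, empty": 12.0,
    "contraction, with computer": 9.0,
}


def to_ft_per_s(mph):
    """Convert miles per hour to feet per second."""
    return mph * MPH_TO_FT_PER_S


fan_mph = readings_mph["fan, free standing"]
for name, mph in readings_mph.items():
    ratio = mph / fan_mph
    print(f"{name}: {mph} MPH = {to_ft_per_s(mph):.2f} ft/s ({ratio:.2f}x fan output)")
```

The ratio column makes the comparison explicit: the empty contraction section runs at 2.4 times the free-standing fan speed, and the populated one at 1.8 times.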

The goal is removing as much heat as possible from the computer components. There is an ideal point where airflow would be moving just fast enough for heat transfer to be most efficient.
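
One way to sanity-check the heat removal is a simple energy balance on the air stream: the heat carried away equals mass flow times specific heat times the temperature rise between inlet and exhaust. The sketch below is only an estimate under stated assumptions: textbook values for room-temperature air, and the 650 W power supply rating from the parts list used as a generous upper bound on heat output (the actual draw isn’t given).

```python
CFM_TO_M3_PER_S = 0.3048 ** 3 / 60  # cubic feet per minute -> cubic metres per second
AIR_DENSITY = 1.2                   # kg/m^3, assumed value for room-temperature air
AIR_SPECIFIC_HEAT = 1005.0          # J/(kg*K), assumed value for air


def air_temp_rise(heat_watts, flow_cfm):
    """Average temperature rise (K) of the air stream: dT = P / (m_dot * c_p)."""
    mass_flow_kg_s = flow_cfm * CFM_TO_M3_PER_S * AIR_DENSITY
    return heat_watts / (mass_flow_kg_s * AIR_SPECIFIC_HEAT)


# 650 W is the PSU rating (upper bound, an assumption); 1200 CFM is the low fan setting.
print(f"Estimated inlet-to-exhaust rise: {air_temp_rise(650, 1200):.2f} K")
```

With this much air moving, the bulk air barely warms up, which is the kind of thing the inlet and exhaust temperature gauges on the switch panel can confirm. It says nothing about how well heat gets from each heatsink into the air, which is where local airspeed and turbulence come in.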

Airflow & Fan Electricity Efficiencies

Another interesting point is the efficiency of one large fan as opposed to numerous smaller fans. Comparing the wattage, costs, and flow reveals the following:

Single Box Fan

1200 CFM = 96 Watts

Purchase Cost- $20

To replicate 1200 CFM, I would need roughly 15 smaller 120mm fans (Based on Scythe Gentle Typhoon 120)

Scythe Gentle Typhoon (Single Fan)

80 CFM = 12 Watts

Purchase Cost– $20

Scythe Gentle Typhoon (15 Fans)

1200 CFM = 180 Watts

Purchase Cost= $300

Stepping up to a 200mm fan would require roughly 10 fans (Antec Big Boy)

Antec Big Boy (Single Fan)

120 CFM = 36 Watts

Purchase Cost– $15

Antec Big Boy (10 Fans)

1200 CFM = 360 Watts

Purchase Cost= $150

Using a single larger fan cuts costs considerably. The box fan is roughly half the wattage of the 120mm fan arrangement and uses roughly one quarter the power of the 200mm fan arrangement. The initial investment in the box fan is also considerably lower than the cost of buying numerous smaller fans.
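
The comparison above can be reproduced with a few lines of arithmetic. This sketch takes the per-fan CFM, wattage and price figures quoted in this section at face value and works out how many of each fan it takes to reach 1200 CFM, plus the total power and purchase cost. The `Fan` class and function names are made up for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class Fan:
    name: str
    cfm: float        # airflow per fan, cubic feet per minute
    watts: float      # power draw per fan
    price_usd: float  # purchase cost per fan


def arrangement(fan, target_cfm):
    """Return (fan count, total watts, total cost) needed to reach target_cfm."""
    count = math.ceil(target_cfm / fan.cfm)
    return count, count * fan.watts, count * fan.price_usd


# Figures as quoted in this section (box fan on lowest setting, PC fans at max).
fans = [
    Fan("Box fan", 1200, 96, 20),
    Fan("Scythe Gentle Typhoon 120", 80, 12, 20),
    Fan("Antec Big Boy 200", 120, 36, 15),
]

for fan in fans:
    count, watts, cost = arrangement(fan, 1200)
    print(f"{fan.name}: {count} fan(s), {watts} W, ${cost}, "
          f"{fan.cfm / fan.watts:.1f} CFM per watt")
```

CFM per watt is a handy single number for comparing fans of different sizes, as long as the airflow figures were measured under similar conditions.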

While it’s not possible to use a box fan with most computer builds, in this application where large airflow volumes are required it’s clearly a superior arrangement. The numbers listed above are with the box fan on the lowest setting; the smaller computer fan numbers are all listed at their max setting. Turning the box fan up to the highest setting allows for over 2000 CFM of airflow.

(CFM and Wattage Data on Scythe and Antec fans pulled from – Xbitlabs & Bit-Tech)

Smoke Testing

Additionally, I wanted to test flow patterns and turbulence around the computer components. In order to do this I needed a way to visualize the path of the air. Just like in a full scale wind tunnel this can be accomplished by adding smoke to the air. I purchased some smoke sticks (I used smoke testing sticks for HVAC systems, as they are non-toxic and leave no residue) that release smoke at a controlled rate. This allowed me to add smoke into the flow path and watch the air path as it moved around and through the components.

My plan was to test the system in standard configuration with the fans in place on the CPU and GPU coolers. This was accomplished with smoke tracing and produced some interesting results. I thought at lower speeds that the smaller fans on the coolers would restrict airflow. However, testing showed that at lower tunnel speeds (5-10MPH) very little turbulence was created. At higher tunnel speeds (15+ MPH) there were small vortices that would appear around the coolers, and as speeds increased the vortices became unpredictable during testing.

My next step is to test the system with passive cooling of the GPU and CPU. I’m curious if the airflow will pass through the CPU/GPU coolers, or if the airflow will move around it instead. More testing is needed.

Computer System

The motherboard is mounted to standoffs which sit on top of the surface of the tunnel floor; however, only two of these are actually secured. This allows me to move the motherboard around if I need to, without having to drill a bunch of holes. The GPUs are mounted in the motherboard and sit vertically which is better as there is less weight on the PCIe slots. I made a small angle aluminum bracket which mounts to the wind tunnel floor and holds the graphics cards securely in place.

Overclocking

The GPUs are overclocked using a combination of software utilities which allows for voltage, power, temperature, frequency and fan control. At a stock voltage of 1174 mV I was able to push the core clock to 1125 MHz with ease. The temps stayed in the 48-50 °C range on GPU 1 and in the 40-42 °C range on GPU 2. Pushing further, I increased the voltage to 1220 mV and achieved a core clock of 1225 MHz with temps increasing 4-6 °C. Given the temperature and voltage headroom still left on the cards, I plan to push both further and see what kind of clocks I can get to.

(For this application the GPU memory is actually downclocked as it has no effect on the processing of research tasks)

GPU Test Numbers

First Overclock Settings

GPU 1

Core Clock- 1125 MHz

Core Voltage- 1174 mV

Core Temp- 41 ˚C

VRM Temps- 41-43 ˚C

GPU 2

Core Clock- 1125 MHz

Core Voltage- 1174 mV

Core Temp- 49 ˚C

VRM Temps- 45-47 ˚C

Second Overclock Settings

GPU 1

Core Clock- 1225 MHz

Core Voltage- 1220 mV

Core Temp- 46 ˚C

VRM Temps- 46-47 ˚C

GPU 2

Core Clock- 1225 MHz

Core Voltage- 1220 mV

Core Temp- 56 ˚C

VRM Temps- 52-53 ˚C
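
To compare the two sets of settings listed above, this sketch computes the percentage change in core clock and voltage and the rise in core temperature per card. The last line applies the common rule of thumb that dynamic power scales with frequency times voltage squared; that is an approximation I’m assuming for illustration, not something measured on these cards.

```python
# Values copied from the two settings lists above.
first = {"clock_mhz": 1125, "voltage_mv": 1174, "core_temp_c": {"GPU 1": 41, "GPU 2": 49}}
second = {"clock_mhz": 1225, "voltage_mv": 1220, "core_temp_c": {"GPU 1": 46, "GPU 2": 56}}


def percent_change(old, new):
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


clock_gain = percent_change(first["clock_mhz"], second["clock_mhz"])
voltage_gain = percent_change(first["voltage_mv"], second["voltage_mv"])
temp_rise = {
    gpu: second["core_temp_c"][gpu] - first["core_temp_c"][gpu]
    for gpu in first["core_temp_c"]
}

# Rule-of-thumb estimate (assumption): dynamic power ~ frequency * voltage^2.
power_scale = (second["clock_mhz"] / first["clock_mhz"]) * (
    second["voltage_mv"] / first["voltage_mv"]
) ** 2

print(f"Core clock: +{clock_gain:.1f}%  Voltage: +{voltage_gain:.1f}%")
print(f"Core temperature rise (C): {temp_rise}")
print(f"Estimated dynamic power: x{power_scale:.2f}")
```

Running the same comparison after each test session makes it easier to see when extra voltage stops paying off in clock speed.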

I was hoping to get to 1275-1300 on the core clock and keep the voltage under 1300 mV. That’s my goal for the next test session; I think with the temperature headroom I have I might just be able to get there.

CPU Overclocking

Overclocking on the Intel Ivy Bridge 3770k platform has been a learning experience. Unlike the Sandy Bridge 2600k CPUs I worked with previously, there is a much harder voltage wall with Ivy Bridge. Additionally, the thermal interface material (TIM) and attachment method of the Ivy Bridge heatspreader are different than the Sandy Bridge CPUs. Through testing it has been confirmed that large reductions in temps can be achieved by “delidding” an Ivy Bridge 3770k CPU.

This process involves carefully removing the factory applied heatspreader with a blade, and replacing the thermal compound that Intel used with something of superior quality. It appears that the real reason a large reduction in temperatures occurs has to do with the reduction in the gap between the die of the CPU and the heatspreader itself. Temperature drops of 20-25 °C are not unheard of with “delidding” a 3770k.

With that knowledge I fully intend on delidding my 3770k CPU so that I can squeeze every last bit of processing power it has to offer. However, I’ve been focused on finalizing the wind tunnel portion of the project and getting everything up and running. So I’ll delid the CPU in the next couple of weeks, when time permits. Currently I’ve overclocked the CPU to 4.5 GHz and left it there for now, though I plan to push it further.

CPU Settings

Frequency- 4.5 GHz

Voltage- 1.26 V

Temperature- 63-65 ˚C (Depending on ambient)

Judging by the current temperatures and voltage used to achieve 4.5 GHz, I hope to be able to achieve 4.8 GHz, possibly even higher. I plan on pushing hard to see what I can get. I don’t think the temperatures will be an issue; it will be a matter of the voltage required to achieve those clocks.

System Performance

Currently, the system has exceeded my initial estimates for output. The goal was to process as much cancer research as quickly as possible.

With the power of the GPUs, multiple tasks/workunits from the Help Conquer Cancer project are able to run concurrently. The average GPU can run 1, sometimes 2 workunits. A higher end GPU can run 2-4 workunits and sometimes 4-8. However, a balance must be struck with the number of units running and the total time it takes to run each unit.

Each of my GPUs can run 8+ workunits, and currently I’m running 10 on each GPU for a total of 20. I’m still testing the number of workunits to run per card for maximum efficiency. At this pace I can process 40 workunits in the time it takes my other computers to process just one.

World Community Grid awards points as a way of keeping track of the work contributed and to help motivate others to join and donate time. The points are worth nothing, they just give you a way to compare what you’re donating in terms of processing power.

Each computer connected to World Community Grid is assigned a host number. There are over 1.6 million hosts registered and of those there are 220,000 active hosts. (Numbers pulled from BOINCstats data) Of those 220,000 active hosts this wind tunnel computer has been in the top 5 for a number of days and has been as high as 2nd position in most points returned for a single host in one day.

On top of this, World Community Grid also tracks hours of processing time donated. Each CPU thread that runs for a full day counts as one day’s worth of time donated. The more CPU cores/threads you have, the more days of processing are completed at once. For example, a 4 core processor would achieve around 4 days’ worth of processing time in a single day, an 8 core would achieve 8 days, and so on. This computer running 20 total tasks simultaneously achieves around 20 or so days of processing time in just one day.
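
The run-time accounting described above boils down to one multiplication. This tiny sketch assumes every task slot stays busy for the whole period, which is a simplification (real systems have gaps between workunits); the function name is made up for the example.

```python
def donated_days(concurrent_tasks, wall_clock_days=1.0):
    """Processing time credited when each running task counts its own run time."""
    return concurrent_tasks * wall_clock_days


examples = [("4 threads", 4), ("8 threads", 8), ("this system, 20 GPU tasks", 20)]
for label, tasks in examples:
    print(f"{label}: about {donated_days(tasks):.0f} days of run time per day online")
```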

From November 2012 to November 2013 this system was online and processing workunits for the Help Conquer Cancer project. In that one-year timeframe it returned over 600,000 workunits and processed over 11 years’ worth of computational time.

System Stats

Workunits Per Day- 4,000-4,200 double units (8,000-8,400 single workunits)

Run Time Per Day- 20 days (worth of processing)

Total Run Time Donated- 11 Years, 275 days

Total HCC (Help Conquer Cancer) Results Returned- 600,000 (as of 10/1/14)

Future Plan

After a lot of further testing with the system, delidding the CPU and modifying flow paths, I was very happy with the overall result. The Help Conquer Cancer project finished well ahead of its projected finish date and I was very happy to have helped contribute. The system is still used for testing purposes and will be used for further testing in the future.

Comments 98

Fantastic build. I think I am going to throw something like this together over the next month or so.

Personally, I have always wanted to visualize the flow patterns around my components in the custom cases I modify (730x.org/h2c, I modify the factory Dell TEC units with aftermarket watercooling units – yes, Dell actually has used watercooling and Thermal Electric Cooling for gaming systems).

But I have never found an acceptable solution for the “smoke sticks” as, like you said, most leave residues or smells. Imagine how excited I was to read that you use the ones made for HVAC systems! That makes perfect sense! You don’t want residue in people’s houses.

So where can I get some of these smoke sticks?

Thanks again!

u should get bigger screen 😛 LOL

Very cool build!

Just a heads up: cooling is a function of flow rate, not velocity. Therefore, you could get the same amount of cooling in a straight duct as you are with the “wind tunnel”. It looks like the wind tunnel does have a purpose in concentrating the air movement across the components.

That and it looks rad!

Thanks!

Correct, one of the reasons I wanted to use the wind tunnel was to build a focused chamber that would force all the air to flow over the components. I wanted to ensure that all the components would have direct airflow.

The flow rate versus velocity was something that was quite interesting to me, and was an influencing factor in wanting to build the wind tunnel so that I could test different air velocities and flow rates. I didn’t want to use a higher speed fan to achieve the higher airspeeds as the trade-off in noise would have been undesirable. That’s why I liked the idea of the wind tunnel as it let me increase the air velocity and still use a relatively standard box fan.

I did a lot of reading of both Ake Malhammar and Sun Jim Kin. Their books, papers and publications offer a wealth of knowledge on the subject. There were tests and studies in relation to airflow velocity and cooling in various forced convection and passive convection setups. Real good info.

I became very interested in the effect of boundary layer air as well as turbulence and its effect on cooling performance and efficiency. The higher air velocities afforded by the wind tunnel have been a good test tool in looking at the effects of turbulence and cooling. I’ve done so much smoke testing at this point that I’m starting to wonder if I should have bought stock in the smoke pellet industry. haha

Thanks for your insights and info! 🙂

This is an outstanding set up! I’ve been crunching with WCG for a little over 2 months now on a simple HP laptop and have been looking for a set up that I could put together as a stand alone computer dedicated to the same project you are currently working on. Do you have specs drawn up for the tunnel and case that you wouldn’t mind sharing? I’d love to try and duplicate what you’ve managed here. Keep up the great work!

Thank you

Sounds like you are already helping and doing a great job. 🙂

I don’t really have a solid set of specs drawn up, most of my initial planning was done as small little doodles and drawings of the concept. But I would be happy to measure up what I have built and send that to you so you could build your own from those specs. The more systems we’ve got online the better. 🙂

Thanks!

You are officially my idol…. what about joining “dott ari” team with your great fire power ?

haha. Thanks. I’m not really worthy of idol material, but I appreciate it.

Currently I’m a member of a different team, they’ve helped me a lot with World Community Grid and getting up to speed. So I’m gonna stay put as I definitely owe them for all that help. But thanks for the offer! 🙂

Give yourself some more room on the exhaust bro, or better yet, H70.

More exhaust room would have been nice, but the space was limited so I had to work within the confines available. The design of the wind tunnel and the way the exhaust/diffuser works is to return the decompressed air back to the state it was in when it entered the inlet.

By the time the air exits the mouth of the diffuser/exhaust it’s moving pretty slowly and the space between the wall doesn’t seem to pose any restriction.

I debated for a long time on going with an H80 or H100 and looked at a lot of reviews. I used this review of the Phanteks and the H100 as info towards that decision-

http://www.overclock.net/t/1322094/corsair-h100-vs-phanteks-ph-tc14pe-vs-therlmalright-ultra-120-rev-c-user-review

As you can see the difference between the H100 and Phanteks is around 2.5C, so they were fairly close. I still would have liked to have that last little bit, but in the end I became very concerned about the durability of the H100. They seemed plagued with pump failures and had a lot of issues, and I didn’t want to take the chance of having the pump fail. I knew the air cooler with its simpler design should be more durable, so I decided to stay with air cooling.

That’s a super cool project (pun intended 😉 ) Featured on Modmag – http://en.modmag.net/news/wind-tunnel-computer-by-mike-schropp

Thanks!

Awesome, looks great. Appreciate it! 🙂

Well, I don’t know if IBM was first but it really sounds like Folding@Home. Anyways, great job! I might end up making something like this for a server rig I’m making soon. Though, wouldn’t the placement of the exhaust so close to the wall block quite a lot of the air?

Thanks! Yeah, both World Community Grid and F@H are excellent causes.

It’s somewhat deceiving in the pictures just how far from the wall the inlet actually is located; it’s roughly 5″ away. The wall doesn’t really pose much restriction, if any. The reason is that the airflow coming out of the exhaust/diffuser section is actually moving quite slowly by the time it reaches the mouth or exit.

Inside the tunnel the highest airspeeds are in the contraction section. So if the contraction section has an airspeed of let’s say 10 MPH then the exhaust will only have an airspeed of around 0.5-1.5MPH, which is pretty slow.

The large area of the inlet/intake allows for relatively low airspeed entering the intake; this larger volume of air is only being drawn in at around 0.5-1.0 MPH. The shape of the tunnel then forces that large volume of air through the smaller contraction section, which causes the increase in air velocity. There are factors that come into play when doing this, such as pumping losses and frictional losses in efficiency, but the result is roughly the same.

Put more simply, the same mass (air) entering the inlet has to exit the exhaust side. When the air speeds up through the tunnel contraction section it’s being decompressed; the faster moving air has a lower pressure. Then when this air hits the exhaust, the purpose of the diffuser is to slowly transition the air back to its original pressure. If there was no exhaust diffuser and you just let air exit right at the contraction section at high speed, you would have a very sudden change and this would result in losses throughout the system.

So by the time the air exits the mouth of the diffuser it has almost returned to its original state and is traveling quite slowly. So the wall poses little restriction in this case.
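
The mass-conservation argument above can be sketched numerically. This is only a rough illustration, assuming the air behaves as incompressible at these low speeds (so area times speed stays constant) and ignoring the pumping and frictional losses mentioned earlier. The cross-section areas are hypothetical values picked to land near the rough speeds quoted in this reply; they are not measurements of the actual tunnel.

```python
# Continuity for low-speed (effectively incompressible) air:
#   area_before * speed_before == area_after * speed_after
# All areas below are hypothetical, not measured from the real tunnel.

def speed_after(area_before, speed_before, area_after):
    """Return the air speed once the duct changes cross-section."""
    return speed_before * area_before / area_after

inlet_area = 400.0        # square inches (assumed)
contraction_area = 40.0   # square inches (assumed, 10:1 contraction)
exhaust_area = 400.0      # square inches (assumed, same as the inlet)

inlet_speed = 1.0  # MPH, roughly the intake speed quoted above

contraction_speed = speed_after(inlet_area, inlet_speed, contraction_area)
exhaust_speed = speed_after(contraction_area, contraction_speed, exhaust_area)

print(f"contraction: {contraction_speed:.1f} MPH")
print(f"exhaust:     {exhaust_speed:.1f} MPH")
```

With a 10:1 contraction the air speeds up tenfold in the narrow section and slows back down by the same factor once the diffuser opens up again, which is why the exhaust flow near the wall is so gentle.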

Why not from bottom (cool air) to top (hot air) flow direction?

Honestly I don’t have a very scientific answer to that question. My guesstimate however has to do with airspeed. In a normal computer case where airflow might not move all that quickly I think the movement of “heat” or “hot air” is something that must be taken into account. I guess this would most similarly relate to convection on some levels. Though I think in its basic form it’s just a matter of thermal equilibrium.

But in this specific instance I think the speed or air velocity is a factor. Given the fact that the airspeed or air velocity is quite high in comparison to a regular computer case I don’t think there is enough time for thermal equilibrium to be a factor. The air is moving through the contraction section so quickly that any heat exchange that happens between the air and components is all there is time for. New air so quickly replaces the air that has just been “heated” that there is always a new supply of fresh air.

I tried to use the terms loosely. Technically I believe the thermal equilibrium of the air is directly tied to the speed at which the particles are interacting with each other, with lighter, faster particles moving more quickly in an upward motion, and heavier, cooler particles not rising as quickly. I’m not a physicist or anything though, just conjecture from reading and trying to learn myself.

Again though, I think the airspeed prevents any of this from happening. But I could be wrong.

Nice work, I tried something like that…well just tried, actually my plan was to use phase change cooling in conjunction with forced aspiration. It didn’t quite work when I started to implement it: first, it was getting costly; second, the waste heat management was complicating things. And it was getting relatively large in size, considering I wanted to make an extension to my present cabinet. Then I tried to replace the concept of phase change with passive cooling, using ice, which kind of worked at the start (just a small duct to a sealed cabinet and exhaust fans at top). The net drop was around 4 to 5 degrees Celsius, but the problem of moisture and constantly replacing the cold reservoir made this solution impractical too. Your solution is just awesome, sans the size. Anyways, great work for great cause 🙂

This sounds like a pretty interesting concept. Did you take any pictures or anything showing it? I would definitely be interested in seeing them. I understand that there were some practical hurdles you ran into, but it’s still an interesting concept.

Thanks btw! The size is a little on the big side, or way on the big side in comparison to a regular PC case, but it’s been a fun project and I’m learning a ton as I go. Thanks. 🙂

Very awesome design and build Totalgeek!!! I also am an avid WCG cruncher and belong to one of the best WCG teams on the net………LOL I know, it’s a personal opinion. I have been crunching now for around 5 years. Thanks to all that crunch for this awesome cause.

Thanks!

haha. No worries, each and every team offers a great contribution to the cause. No matter how small or big, if you’re crunching for the cause you’re making a difference. 🙂

Crunch on!


Very cool idea and setup. My only concern would be how do you control the dust. That much air moving has to produce a bunch. You might try incorporating window A/C unit type filter material. Catches most of the dust with very little air flow loss. I use them on my “closet” setup with no cases. Just a thought.

Thanks! 🙂

The dust issue was definitely something I was worried about. As you said, moving a ton of air was going to mean a ton of dust too. I think the window A/C unit filter you suggested is exactly what I ended up with, though I’m not sure. haha. I ended up buying these filters that go over window box fans; they are made of a kind of stretchy nylon material and seem to work great. The restriction to flow is minimal and they seem to catch the dust pretty well.

Thanks for the idea though, I’m gonna look them up and see if they are similar or possibly better than what I have now. I’ll have to add air purifier to the list of things that the wind tunnel seems to be good at, the other being heating my basement. 🙂

Wouldn’t it be cheaper in terms of running cost to just water cool it? I have a similar system with a 3960x running at 5GHz and my max temps are around 80 on the CPU and 50 on the GPUs. Much quieter too. Awesome build and cause though!

Thanks!

It might be cheaper; honestly I didn’t really spend a ton of time investigating the water cooling option. I have a computer that’s water cooled that I tinker with and it’s definitely fun.

But I was really interested in how far I could push air cooling, and I’ve been interested in this wind tunnel concept for a long time. So I really wanted to build the wind tunnel as a way to test different air cooling setups.

The wind tunnel is surprisingly quiet; it’s only about 65-67 decibels right at the inlet, and standing in front of it you can’t hear it that much at all. The tunnel itself seems to really quiet the noise down, and the inlet noise isn’t that bad because the fan is set inwards a bit inside the intake section. Also the single large fan can operate at a pretty low rpm and still generate a bunch of airflow, so that helps keep things quiet.

In comparison my Lego Folding Farm is about 75-77 decibels with its four 140mm intake fans. So it’s all you can hear in my basement. haha
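
To put those two readings side by side, here is a small sketch of the standard decibel relationship: a difference in decibels corresponds to a sound intensity ratio of 10 raised to (difference / 10). It uses the midpoints of the ranges quoted above and assumes both readings were taken the same way (same meter, same distance), which the numbers above don’t confirm.

```python
# A difference in decibels maps to a ratio of sound intensity:
#   ratio = 10 ** (delta_db / 10)

def intensity_ratio(louder_db, quieter_db):
    """How many times more sound intensity the louder source has."""
    return 10 ** ((louder_db - quieter_db) / 10)

wind_tunnel_db = 66.0   # midpoint of the 65-67 dB reading
lego_farm_db = 76.0     # midpoint of the 75-77 dB reading

ratio = intensity_ratio(lego_farm_db, wind_tunnel_db)
print(f"about {ratio:.0f}x the sound intensity")
```

A 10 dB gap works out to roughly ten times the sound intensity, which fits with the Lego Folding Farm drowning everything else out.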

I’ve still got a lot of tests to do from here. I plan to delid the processor and then push the overclocks on the CPU hopefully into the 4.8-5.0 range. Then I’ll move to the GPU and see how far I can push them.

How much did it cost to build this?

The computer components themselves cost right around $1,200. Mind you, I bought one of the 7970 cards for $50 used because it didn’t work. It had a borked 8 pin power connector that was busted at the solder joint and had issues. So buying a used 7970 and fixing it saved me a bunch of money.

That $1,200 includes the monitor and the keyboard as well.

The wind tunnel itself cost roughly- $150

Fan- $20

Aluminum Edging- $50

Scrap Aluminum (Monitor Stand)- $5

Scrap Alum/Steel Tubes (Top Decoration) -$5

Box of 10-24 Screws- Already had

Lexan Piece- $15

MDF Board- Already had, leftover from different project

Paint- Leftover from Buster Sword project

LED Gauges- $25

Switches- $30 ($5 a piece)
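
As a quick check, the priced items above do add up to the roughly $150 quoted for the tunnel. The sketch below just tallies the list, counting the “already had” and “leftover” items as $0.

```python
# Wind tunnel parts with a listed price; items marked "already had"
# or "leftover" are left out because they cost nothing extra.
parts = {
    "Fan": 20,
    "Aluminum edging": 50,
    "Scrap aluminum (monitor stand)": 5,
    "Scrap alum/steel tubes (top decoration)": 5,
    "Lexan piece": 15,
    "LED gauges": 25,
    "Switches": 30,
}

tunnel_total = sum(parts.values())
switch_count = parts["Switches"] // 5   # listed at $5 a piece

print(f"wind tunnel total: ${tunnel_total}")
print(f"switches bought:   {switch_count}")
```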

Thank you so much. How many points does this push out?

No problem.

On average I get around 240,000-260,000 BOINC points a day

or

1,650,000-1,800,000 WCG points a day

This looks like a fun project. Since the goal was to have this running 24/7 I’m curious about the total power consumption. It looks like you measured the power of various fans, but what about the GPUs, CPU, motherboard, monitor, etc., and everything all combined?

Yes, power numbers. I omitted them at first because I didn’t have finalized numbers. My kill-a-watt died (read- was crushed by friend I let borrow it) midstream during the build and I didn’t get around to gathering updated power numbers on the whole setup. I’ve got a new kill-a-watt on the way though and hope to have updated numbers within the week.

The initial numbers I had were in the 510-515 watt area, but I changed a lot of settings since then and with the changes I wanted to see what my new readings were. I’ll post them when I get the updated numbers. I plan to check power numbers at the current overclocked settings, and then at the maxed out overclocked settings.

Finally got a new Kill-a-watt and was able to get some new numbers based on my current settings. The system in its current state of overclock is pulling right around 415 watts at the wall. This is without the monitor. The monitor measures around 40 watts.

Based on the 415 watt reading at the wall and looking up some tests on the efficiency of the power supply it looks like it should be in the 91-92% efficiency area at that load. That would put me around 380 watts at the PSU.
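
The arithmetic behind that estimate, as a rough sketch: multiply the reading at the wall by the efficiency to get the power the PSU actually delivers to the components. The 91-92% figures come from the efficiency tests mentioned above; the daily energy line is an extra illustration that assumes the draw stays constant around the clock.

```python
def dc_load_watts(wall_watts, efficiency):
    """Estimate the power delivered by the PSU from a reading at the wall."""
    return wall_watts * efficiency

wall_watts = 415          # Kill-a-watt reading, monitor excluded
low = dc_load_watts(wall_watts, 0.91)
high = dc_load_watts(wall_watts, 0.92)

# Energy drawn from the wall over a full day of 24/7 crunching,
# assuming the 415 W reading holds steady (an assumption).
kwh_per_day = wall_watts * 24 / 1000

print(f"PSU output: {low:.0f}-{high:.0f} W")
print(f"wall energy: {kwh_per_day:.2f} kWh/day")
```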


This is absolutely fantastic. You have inspired me to help contribute to the fight. My 3 year old has cancer and we are fighting it every way we can. Although my computer is not as powerful as yours, I will make sure to add it to the fight. I had no idea you could help out with just leaving your computer on.

Thank you for all your hard work.

Thank you, I’m very glad to hear that this could help to inspire. 🙂

Any computing power you can bring online greatly helps the cause, no matter how big or how small. It’s when all of us work together that we can have the greatest impact. If you need any assistance or help with adding your computer feel free to email me and I would be glad to help you.

I wish you all the best in your family’s fight with cancer.

A very noble, and cool project!

Looking at your setup, I was wondering if you had tested the airflow without the PSU in the tunnel? I would think the PSU’s fan would be adequate to keep it cool, so you could move it out of the tunnel and hopefully see better airflow over the remaining components.

Very inspiring, I will have to see what systems I can cobble together for the cause.

Thank you! Appreciate it. 🙂

I did test out the airflow with the PSU in the tunnel and out of the tunnel. I was curious about the same thing. I moved the PSU around and tested it in various positions in the tunnel and out of the tunnel to try and see what effect there was on airflow. I found that as long as I had about an inch or two worth of space between the PSU and the back of the motherboard, the airflow was unaffected.

My plan to add a second system down the road was why I was interested in seeing if I could move the PSU out of the tunnel. The PSU will be moved back farther when I add the second system in place.

Any systems or processing power you can bring online is a help for the cause. 🙂

Amazing project! I am seriously inspired by the time and effort, let alone the ingenuity that has gone into this project.

I did have one thought though … if you are bothered by the noise of the fan, or wanted a quieter alternative, have you thought about investing in a serrated fan instead to decrease the noise output? I recently saw it on a TV program about how animals inspire invention, and apparently the noise reduction from replicating an owl’s wing is very significant.

Good luck with the second PC – keep up the good work.

Thanks!

Good thought on the fan blade design. Something I’ll definitely look into, sounds like an interesting concept, and I’m always up for some more testing. 🙂

Currently you can’t really hear the wind tunnel all that much because the Lego Folding Farm is loud! It drowns everything out. haha

That is awesome. It has totally inspired me to use my 7950 to help fight cancer at night. The whole concept of your computer being a charitable and life saving tool is really inspiring.

As others have said, I find it quite contradictory to have such a huge volume of air flowing through the case, and still running the CPU and GPU, and PSU fans. Effectively all the big fan is doing is cooling the SSD and memory (and adding noise).

If you are serious about cooling you may want to consider a custom water-cooling loop. That seems to be the way to go if you are looking for the last % of performance. I guess that is penciled in for the next project?

Thanks! Adding your 7950 to the fight will definitely help!

Yeah, the cooling fans were left on the respective components for testing. I was very interested in what the airflow looked like around the components themselves with the factory coolers in place. I did a bunch of smoke testing to see just what the airflow patterns looked like around the coolers at various airflow speeds.

Turning the big fan off results in a rise in temperatures across all the components. I tested this a couple of different ways. The airflow around the coolers doesn’t seem to care at lower tunnel speeds. Small vortices are created at the coolers, but they seem of minimal impact.

With the system running under full load 24/7 I have found it better to cool everything. I’m a big proponent of cooling the PCBs and all the VRM, MOSFET, chips and circuits. This is part of the reason I wanted to be able to run higher speed air and larger volumes of air over everything that had electricity running through it. With my goal of pushing everything as far as I could, I wanted everything to be actively cooled.

I’ve got a different computer that I play with that’s water cooled. It’s fun to play around with for sure. But for this project I wanted to see how far I could push air cooling. I do plan to do some passive heatsink testing though, I’m curious about the results.

First step though is delid the processor, then push the overclocks as high as they’ll go. 🙂

If running in a normal office environment, how do you keep the dust out? With that amount of air moving through, I bet it would require weekly dust removal. You need a filter.

I think this is totally cool! One question though: have you thought about dust and filtering? Maybe you could even use a standard home furnace filter…

Thank you!

Yes, dust and filtering were a consideration. I actually looked into the option of using a home furnace filter at first. But it seemed to be too restrictive. So after some more looking I found that they make box fan screened filters. They are like a pantyhose kind of material. They come on box fans meant for use in windows to prevent sucking in outside air that might be dirtier or dustier. I bought one of these and stuck it on the fan and it works great. Little to no restriction, but it seems to filter dust just fine.


Hello sir,

I cannot tell from your blog, but are you “pushing” or “pulling” the air through the wind tunnel? your drawing seems to indicate that you are pushing the air through. I suggest flipping the fan and pulling the air through the wind tunnel (air now enters through the smaller hole and exits at the fan) so you get smoother airflow and most likely higher efficiency.

overall, your project looks very well done. Nice job

Thank you!

I did some experiments in the push/pull configuration with the fan. The pull configuration (drawing air through the tunnel) resulted in less turbulence when running. This was more apparent at higher fan speeds. At lower speeds it didn’t seem to show up as much.

When I switched to the pushing configuration I had more turbulence in the air. So I added some tubes on the other side of the fan that helped with straightening the airflow out. This helped with my turbulence issues. It’s a small loss in efficiency as you point out though, because the drag from the tubes consumes a slight amount of airspeed.

If I were doing aerodynamic testing of any kind then I definitely would have switched the fan orientation and done a pull configuration. But because I’m just looking for airspeed and air volume I was okay with the small trade in efficiency from using the tubes to straighten the air, versus having the fan blowing out of the tunnel. The only reason is noise: the fan is noisier on the outlet side and a little quieter on the inlet side, so I wanted the inlet side of the fan facing out.

Thanks for your feedback. I’m always looking for tips or further testing ideas. 🙂

Just curious. If your main cooling fan dies (all fans die) what provisions do you have in place for a graceful shutdown?

The system is monitored using PC monitor software. It lets me remotely keep tabs on things and is set up to send alerts if certain criteria are met (fan speeds, temperatures, etc.).

If temperatures pass a certain threshold the system is set up to auto-shutdown, first by killing BOINC and then by shutting down the PC completely. To date though I’ve never had the system need to auto-shutdown.
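
The two-stage shutdown described above could be sketched like this. It is only an illustration of the decision logic, not the monitoring software’s actual configuration: the function name, the sensor names, and the temperature limits are all hypothetical, and the real thresholds aren’t given here.

```python
# Hypothetical limits in degrees Celsius; the real thresholds are not
# stated anywhere in this thread.
LIMITS_C = {"cpu": 85.0, "gpu": 90.0}

def shutdown_steps(temps_c, limits_c=LIMITS_C):
    """Return the ordered actions to take for the given temperature readings.

    An empty list means every reading is within its limit.
    """
    over = [name for name, temp in temps_c.items()
            if temp > limits_c.get(name, float("inf"))]
    if not over:
        return []
    # Stop the crunching load first, then power the machine down.
    return ["stop BOINC", "shut down PC"]

print(shutdown_steps({"cpu": 70.0, "gpu": 72.0}))
print(shutdown_steps({"cpu": 70.0, "gpu": 95.0}))
```

Stopping BOINC first removes the heat-generating load right away, so the full shutdown that follows happens on a system that is already cooling down.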

Also, one more thing. Have you thought of relocating your power supply to outside of the wind tunnel? This should not need the extra cooling and seems like it would represent a large impact on air flow.

Finally, this build is fascinating. I am seriously considering doing something like this (based on your design). I was considering adding an air furnace HEPA filter to the design. Suffering from allergies I can clean the air in my home – and help out at the same time. 🙂

Yes, I initially tested airflow with the Power Supply in a couple of different positions, but it didn’t seem to have much effect. As long as I left enough of a gap between the power supply and the other components it didn’t seem to have an effect on airflow. When the system is fully loaded the Power Supply is under a fair amount of load, so I wanted to keep a supply of air going to it just to keep it cooled.

Thanks! Glad you found it interesting. And yes, you could add a filter to the inlet side. Based on what I found with filters I don’t think you could get away with a full furnace filter if you were just using a standard box fan. The airflow will drop quite a bit with a full filter like that. I used just a box fan filter, which is kind of like a pantyhose material that stretches over the fan, it’s really light and just catches the dust, but it’s definitely not HEPA. If you were going to use a HEPA filter I would suggest using a slightly more powerful fan. 🙂

Hi,

I’m just wondering why you didn’t use passive cooling blocks for the cpu/gpu instead of the ones you’re using now. I think the fans on the current ones might block the airflow?

Nice job though! I downloaded and installed the software myself thanks to your article!

Thanks! Glad you joined! The more power we can throw at the grid the faster we can return results and help contribute. 🙂

I was at first predominantly interested in seeing how the airflow at various speeds would react around the existing coolers on the GPU/CPUs. So I tested at various speeds, 5-10MPH all the way up to 25+ MPH using smoke to show the patterns. At lower speed there are a lot of small vortices that form around the fan/cooler areas, but for the most part the air seems to just push through, and around the coolers.

At higher speeds though things get more interesting, the small little vortices will disappear for moments and then reappear as much larger vortices. But this only seems to happen at very specific air speeds. Moving just above or below those speeds causes them to go away.

There is a lot of turbulent air at very high speeds near the flat areas, where air has to move around the object. But it seems to have no ill effect on cooling. At very high speeds the fan on the CPU cooler actually overspins, which shows up as RPM spikes. I assumed this was from air moving into the fan at a faster speed than it was drawing air in?

I do plan on removing the active coolers though and doing a bunch of testing with passive coolers. I want to delid the CPU first though, as currently I think the factory heatspreader is acting as a thermal barrier and limiting heat transfer to the cooler itself, thereby limiting the effectiveness of air cooling it.

Further testing!

Thank you for the answer, looking forward to some more testing!


From one gadget geek to another. This is way cool! And for the sake of saying it before someone else… But can it run Crysis? :p

Thanks!

haha… Crysis bows before it!

Though honestly I have no clue. I’ve never used it for gaming; it’s processing 24/7, so it’s always busy. Though I would hope with the hardware that Crysis would be playable at some pretty decent framerates. 😉


AWESOME !

A link to your WCG profile ?

second that

Here is a link to my user profile at BOINCstats-

http://boincstats.com/en/stats/15/user/detail/733754

Here is a link to the Wind Tunnel Computer at BOINCstats-

http://boincstats.com/en/stats/15/host/detail/2231848/overview

If you’re interested in other info let me know! 🙂

thanks 🙂
